Microsoft and Google DeepMind Researchers Propose ‘Agentic Risk Standard’ for AI Transactions

Researchers from Microsoft and Google DeepMind have introduced the Agentic Risk Standard (ARS) to provide financial safeguards and underwriting for autonomous AI agents.

- Researchers from Microsoft Research, Google DeepMind, and Columbia University have proposed the Agentic Risk Standard (ARS), a framework for managing financial risks in autonomous AI transactions.

- The protocol introduces escrow, underwriting, and collateralization to protect users from financial losses when AI agents execute tasks involving real assets.

- Separately, Bitcoin wallet provider Nunchuk released open-source tools to grant AI agents “bounded authority,” requiring human approval for transactions exceeding set limits.

A cross-institutional group of researchers from Microsoft Research, Google DeepMind, and Columbia University has released a proposal for the Agentic Risk Standard (ARS), a settlement-layer framework designed to add financial safeguards to autonomous AI tasks. The paper, titled “Quantifying Trust: Financial Risk Management for Trustworthy AI Agents,” argues that existing AI safety techniques provide only probabilistic reliability, leaving a “guarantee gap” that prevents users from delegating high-stakes financial responsibilities to AI.

The Agentic Risk Standard seeks to bridge this gap by applying traditional financial risk management principles to AI. For standard service tasks, such as generating reports or writing code, the framework uses escrow accounts, where payment is released only once the work has been verified. For more complex “fund-handling” tasks, including trading and currency conversion, the ARS introduces a dedicated underwriting layer. In this model, a risk-bearing party evaluates the task, prices the potential risk, and commits to reimbursing the user if the agent fails or misexecutes the instruction.
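The two settlement paths described above can be illustrated with a minimal sketch. It is not taken from the ARS paper: the class names, the `premium_bps` parameter, and the flat pricing rule are all hypothetical, and amounts are in integer cents to keep the arithmetic exact.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    RELEASED = "released_to_agent"
    REFUNDED = "refunded_to_user"


@dataclass
class Escrow:
    """Service-task path: hold the payment until the work is verified."""
    amount_cents: int

    def settle(self, work_verified: bool) -> Outcome:
        # Payment leaves escrow only when verification succeeds.
        return Outcome.RELEASED if work_verified else Outcome.REFUNDED


@dataclass
class Underwriter:
    """Fund-handling path: a risk-bearing party prices and covers the task.

    premium_bps is a hypothetical flat rate in basis points; a real
    underwriter would price risk per task.
    """
    premium_bps: int

    def quote(self, funds_at_risk_cents: int) -> int:
        # Premium charged up front for taking on the risk.
        return funds_at_risk_cents * self.premium_bps // 10_000

    def reimbursement(self, funds_at_risk_cents: int, agent_succeeded: bool) -> int:
        # The user is made whole only if the agent failed or misexecuted.
        return 0 if agent_succeeded else funds_at_risk_cents


escrow = Escrow(amount_cents=5_000)
underwriter = Underwriter(premium_bps=200)  # assumed 2% premium
print(escrow.settle(work_verified=False))
print(underwriter.quote(100_000))
print(underwriter.reimbursement(100_000, agent_succeeded=False))
```

In this sketch the escrow path only protects the fee paid for the work, while the underwriting path covers the funds the agent was handling, which is the distinction the proposal draws between service tasks and fund-handling tasks.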

“The industry is building increasingly autonomous AI agents but hasn’t addressed what happens when they fail with someone’s money,” said Chandler Fang, founder of t54 Labs and a co-author of the proposal. “Financial risk management for AI agents isn’t optional; it’s foundational.” Simulations conducted by the researchers suggest that agent underwriting services could reduce user losses by up to 61%, while collateral requirements could deter nearly 20% of high-risk transactions from occurring.

The move comes as the industry grapples with the rise of “sandbox economies,” a concept highlighted in recent Google research where AI agents interact and trade in environments that could potentially spill over into real-world markets. To combat these risks at the infrastructure level, Bitcoin wallet firm Nunchuk has launched open-source tools including the Nunchuk CLI and “Agent Skills” repositories. These tools enable AI agents to manage Bitcoin wallets under a “bounded authority” model.

Under Nunchuk’s framework, AI agents can perform routine tasks but are restricted by multisignature policies. If an agent attempts a transaction that exceeds a user-defined spending limit, it requires an explicit signature from the human owner to proceed. This ensures that while agents can operate autonomously within safe parameters, they do not have unilateral control over private keys or total fund balances.
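The “bounded authority” rule can be expressed as a simple policy check. The sketch below is illustrative only: it does not use the Nunchuk CLI or any real multisignature API, and `SpendingPolicy`, `limit_sats`, and `authorize` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpendingPolicy:
    """User-defined ceiling on what the agent may spend on its own."""
    limit_sats: int


def authorize(amount_sats: int, policy: SpendingPolicy, human_signed: bool) -> bool:
    """Return True if the transaction may proceed.

    At or below the limit, the agent's own signature is enough.
    Above the limit, an explicit human signature is also required.
    """
    if amount_sats <= policy.limit_sats:
        return True
    return human_signed


policy = SpendingPolicy(limit_sats=100_000)  # assumed limit for the example
print(authorize(50_000, policy, human_signed=False))   # within the limit
print(authorize(150_000, policy, human_signed=False))  # over the limit, no human signature
print(authorize(150_000, policy, human_signed=True))   # over the limit, human co-signed
```

In a real multisignature wallet the limit would be enforced by the signing policy itself rather than by application code, so the agent could not bypass it by skipping the check.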

Disclaimer: This article is for informational purposes only and does not constitute advice of any kind. Readers should conduct their own research before making any decisions.

© Cryptopress. For informational purposes only, not offered as advice of any kind.

Latest Content

Lo Último

- Tether Leads $150 Million Recovery Initiative for Drift Protocol Following $270 Million Exploit

- Polkadot Leads Social Discourse Thanks to Hyperbridge

- Tom Lee’s BitMine Reports $3.8 Billion Quarterly Loss Following Ethereum Price Drop

- Bitcoin Faces $76K Resistance as Exchange Inflows Surge to Multi-Month Highs

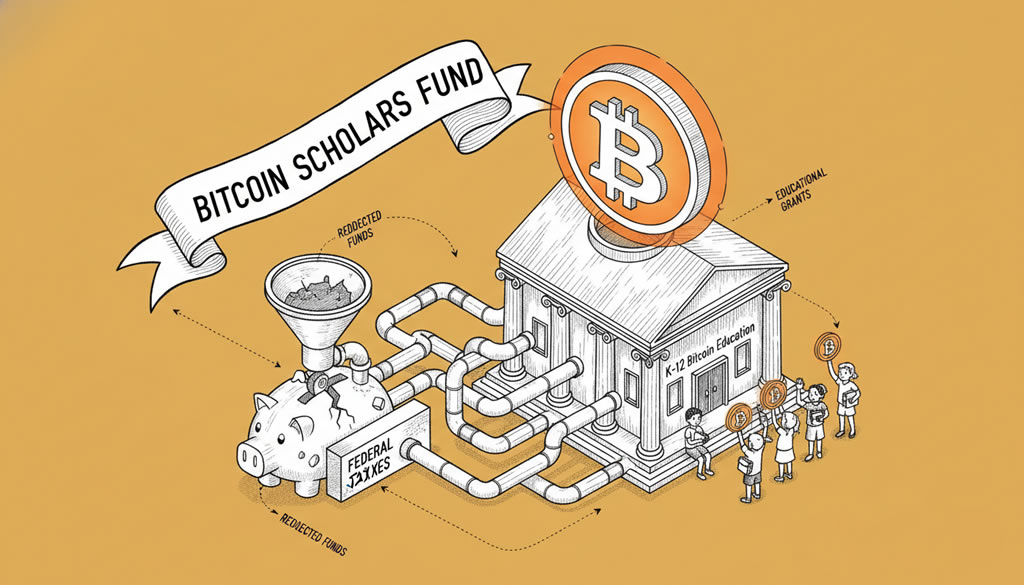

- Bitcoin Scholars Fund Launches to Redirect $21M Federal Taxes to K-12 Bitcoin Education

Related

- AI Agents Are About to Own Crypto Commerce AI Agents Are About to Own Crypto Commerce: How x402, Messari, and AI-Native Protocols Are Building Machine Economies....

- Crypto portfolios of top analysts and influencers in June 2025 The portfolios of prominent crypto analysts and influencers play a key role in shaping public sentiment and investment decisions. (This is not financial advice.)...

- ERC-8004: Ethereum’s Bid to Create a Secure AI Economy Ethereum's new ERC-8004 standard introduces portable identities and reputations for AI agents, fostering trustless interactions and a decentralized AI economy....

- Top 7 Best Crypto Presales to Buy in 2025: Token Sales & Equity Opportunities Revolutionizing Industries Top 7 best crypto presales to buy in 2025. Token sales & equity opportunities solving real problems. MovitOn, NGRAVE, Datai Network reviewed....